In a previous article we addressed how, despite having machine learning, digital merchandisers are forced into manually managing synonyms. We showed how it is tedious, laborious, non-scalable – and error-prone. It can result in hours and hours spent (or more accurately, wasted) by your team in judging the value of a given synonym extraction.

This approach doesn’t go wrong because your merchandisers and product managers lack outstanding knowledge of your catalog. They know it, and the industry, inside and out. The problem isn’t them; it’s manual curation itself.

And why is that? Because we don’t trust machine learning algorithms. And with all the noise about bias, AI ethics, and black boxes – it’s understandable. So how can you overcome your Artificial Intelligence (AI) trust issues – and, should you?

Why Hands-on Curation Continues

In ecommerce, synonym management to close vocabulary gaps has attracted a lot of attention recently, but vendors don’t just stop at offering up synonyms for approval. They offer the same “opportunity” for stemming and typo corrections, placing on merchandisers the heavy burden of approving or declining other suggestions generated by machine learning.

This just pulls your merchandisers away from running your business and burdens them with rudimentary tasks instead.

Yet for some reason, we are back to the ’00s with manual curation being touted as a best practice by certain vendors. There is an insidious reason why: Manual curation lets humans control the machine.

And as SmartInsights points out: The maxim “retail is detail” requires, by definition, a hands-on approach. If that’s the case, why on earth would you run the risk of letting a computer loose on such an important job?

And the answer is: machine learning algorithms done right are better at predicting, recommending, and personalizing relevant experiences. So how do you know if they are “done right?”

Choose an AI Player You Can Trust

Not long ago, Gartner Analyst Mike Lowndes pointed out that “merely using AI is no longer differentiating,” as pretty much all vendors now “use machine learning or other forms of AI to enhance their products.” We agree: using AI isn’t enough. You need to partner with a known leader in innovation and AI modeling – for ecommerce – and the unique challenges ecommerce companies face.

When selecting an AI partner, the best bet used to be assessing their intellectual property (IP) strategy. But patents on software have become harder to get since a 2014 Supreme Court ruling that merely implementing an idea on a computer isn’t enough to make it patentable. Plus, many players in the AI research community are philosophically opposed to the idea of patenting AI concepts.

For instance, Mark Riedl, a professor at Georgia Institute of Technology currently on leave to work at Salesforce’s AI research group in Palo Alto, says patents on fundamental machine-learning techniques make him uneasy. Even Apple, which has a notorious culture of secrecy, has recently launched a machine learning journal and enabled its researchers to publicly share findings at top ML conferences, including NeurIPS.

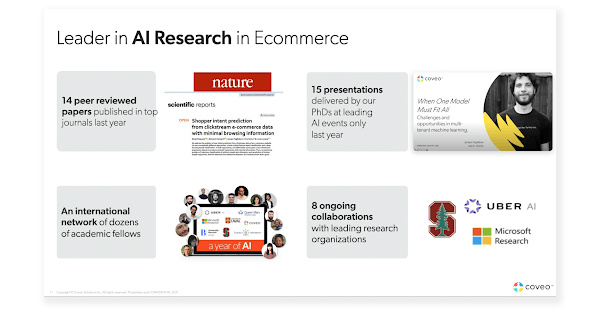

However, patents aside, you can see a company’s commitment to research and innovation through its published papers in scientific publications. In this spirit, our colleagues here at Coveo conduct ground-breaking scientific research to power cutting-edge innovation. Our PhDs are prolific data scientists who publish research in top outlets in the field. They’ve published in Nature’s journals and in top AI and NLP journals and conferences (e.g., RecSys, SIGIR) alongside researchers from Amazon and Netflix.

They also teach, present their work, and engage with the relevant research community. In fact, we’ve established a network of collaboration with researchers from the best universities and organizations in the world.

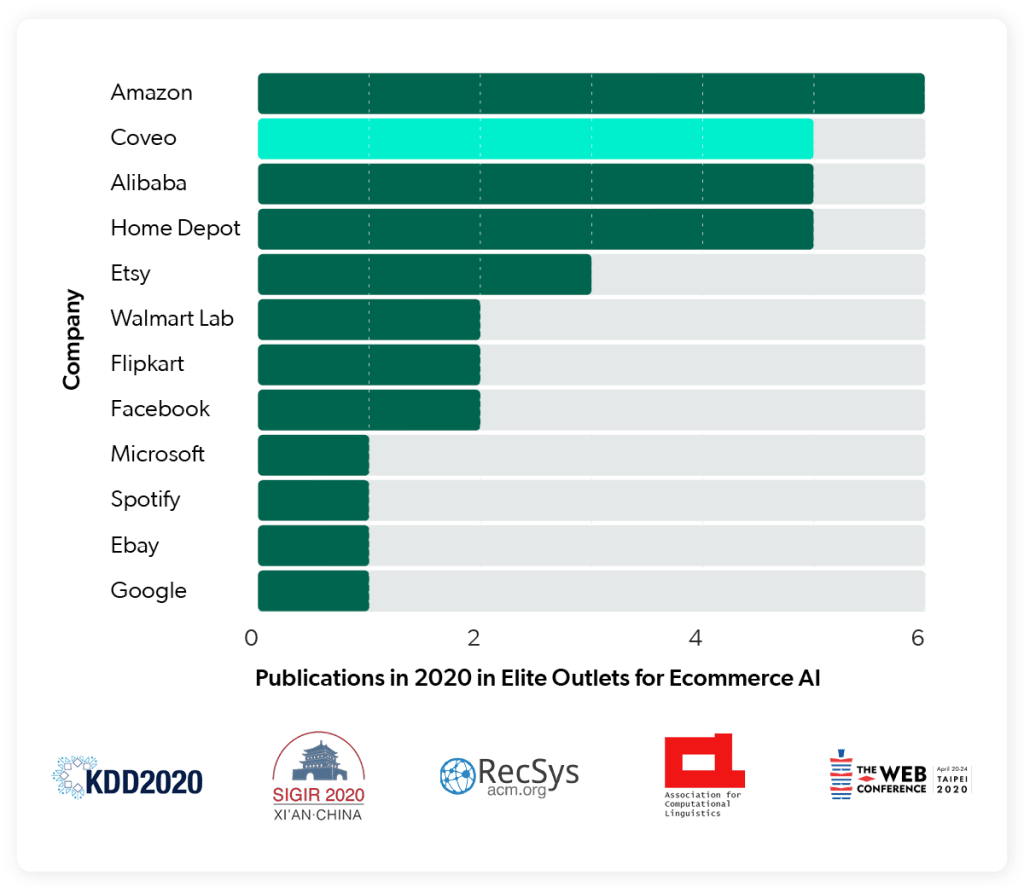

In 2020, measured by the number of papers published in peer-reviewed journals, Coveo was the second most prolific AI research player after Amazon, tied with Alibaba and Home Depot. Some of those outlets are identified on the left of the chart below.

Seek Transparency, Not Evasion

In far too many cases, vendors are just slapping a sticker that says AI or ML on their products. And when AI and ML are presented as mysterious “black boxes,” they’re hard to trust. As it turns out, one of the barriers to AI adoption and a driver of mistrust is precisely this opacity and lack of “explainable AI.” People (often understandably) struggle to trust the decisions and answers that AI-powered tools provide.

There is also a culturally ingrained fear of the unknown surrounding technology. Trustworthiness involves conveying the nature of the AI models being used.

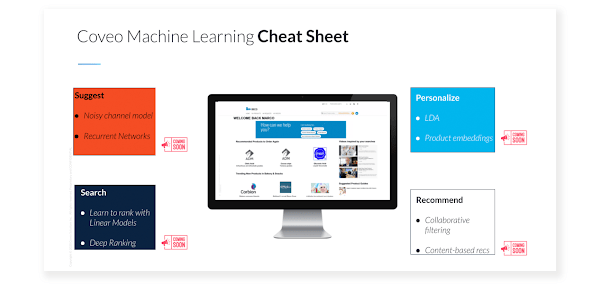

Being able to explain the inner workings of AI to key stakeholders helps businesses overcome trust issues and use AI technology to its full potential. For example, at Coveo we have witnessed significant benefits from being able to detail and explain the models that support our innovation efforts in ecommerce search.

Educating and enabling customers is one of our key missions. One of the main reasons we started Coveo AI Labs is to bring prospects and customers into our world, so they can better understand the inner workings of the different AI techniques and approaches they are using and benefiting from.

For example, we’ve been writing extensively on product embeddings, an AI model that has brought Coveo’s personalization capabilities to the finest level. Coveo builds a product space by adapting word2vec into prod2vec: browsing sessions are treated like sentences and products like words. From those sessions we can build a dense representation of products that forms a “space”: similar products (shown below) will be “close” in the space. This space can be built from tracking data alone, with no human labels or intervention, completely unsupervised.

The idea is that your customers reveal preferences and interests when moving from one product to the other. Because products sit in a shared space, you can predict what’s more relevant to them: the products closest to the ones they have browsed.
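To make the “closeness” intuition concrete, here is a minimal sketch in Python. It is not Coveo’s prod2vec implementation: instead of training skip-gram embeddings, it uses a much simpler stand-in, counting which products appear together in a session and comparing those counts with cosine similarity. The sessions, product IDs, and function names are all made up for illustration.

```python
from collections import Counter, defaultdict
from math import sqrt

# Hypothetical browsing sessions: ordered product IDs viewed by one shopper each.
sessions = [
    ["running_shoes", "running_socks", "running_shorts"],
    ["running_shoes", "running_shorts", "water_bottle"],
    ["running_socks", "running_shoes", "water_bottle"],
    ["tv_55in", "hdmi_cable", "soundbar"],
    ["soundbar", "tv_55in", "hdmi_cable"],
]


def cooccurrence_vectors(sessions, window=2):
    """For each product, count the products seen within `window` steps of it."""
    vectors = defaultdict(Counter)
    for session in sessions:
        for i, product in enumerate(session):
            start = max(0, i - window)
            end = min(len(session), i + window + 1)
            for j in range(start, end):
                if j != i:
                    vectors[product][session[j]] += 1
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse count vectors (Counters)."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = sqrt(sum(x * x for x in u.values()))
    norm_v = sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def most_similar(product, vectors, top_n=3):
    """Rank the other products by how close they are to `product`."""
    scores = [
        (other, cosine(vectors[product], vectors[other]))
        for other in vectors
        if other != product
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:top_n]


vectors = cooccurrence_vectors(sessions)
print(most_similar("running_shoes", vectors))
print(most_similar("tv_55in", vectors, top_n=2))
```

No one labeled any product as “running gear” or “home theater” here; the grouping falls out of the session data alone. A real prod2vec model learns dense vectors rather than raw counts, but the neighborhood idea is the same.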

Explaining tech and AI/ML with metaphors, examples, and visual illustrations always helps. For example, it helps to show that the product space created using product embeddings resembles scenarios everyone is familiar with. It is not too different from what we would see in a department store, where related products are placed close to each other.

Probably an even better representation of this is provided by the mannequins below, which clearly illustrate a running “theme,” showing several related products which are all relevant to a shopper interested in items for running.

Always Be Testing

Although pretty much every vendor can say they do AI, different technologies and solutions differ in their effectiveness. So, the key question is whether you can tell the difference in performance. That’s arguably the most important driver of differentiation.

To help organizations accept ML, it’s useful for teams to conduct A/B tests that compare the outcomes of the AI solution under consideration with those of the alternative. For example, at Coveo, we’ve had A/B tests against just about all of our competitors and we consistently win.
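If you want to check whether the difference between two variants is more than noise, one common approach is a two-proportion z-test on conversion rates. The sketch below is one way to do that in Python; the function name and the traffic and conversion numbers are hypothetical, and it assumes each visitor is counted once and assigned to a variant at random.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_a / n_a: conversions and visitors for variant A (e.g. current search).
    conv_b / n_b: conversions and visitors for variant B (e.g. AI-driven search).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed with the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Made-up example: 10,000 visitors per variant.
z, p = two_proportion_z_test(conv_a=400, n_a=10_000, conv_b=470, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the lift is unlikely to be chance alone. It says nothing about whether the test ran long enough or whether the traffic split was fair, so treat it as one input to the decision rather than the verdict.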

Our view is that barriers to Artificial Intelligence adoption and trust can be overcome with the following recommendations: choose a leading AI player you can trust, one that can clearly explain the models and technologies being used and that proves its value through A/B tests.

The bottom line is, of course, that you want to return the most relevant products to fulfill your shoppers’ goals. But placing the onus of ensuring Relevance on merchandisers means bringing Search & Discovery technology back to the late nineties.

The ideal approach is an AI-driven one where nothing is manual – except setting the business goals you wish to achieve. A truly AI-driven approach gains intelligence and relevance from the fuel of your shopper behavioral signals. No manual intervention or continuous control over the outputs of machine learning should be required.